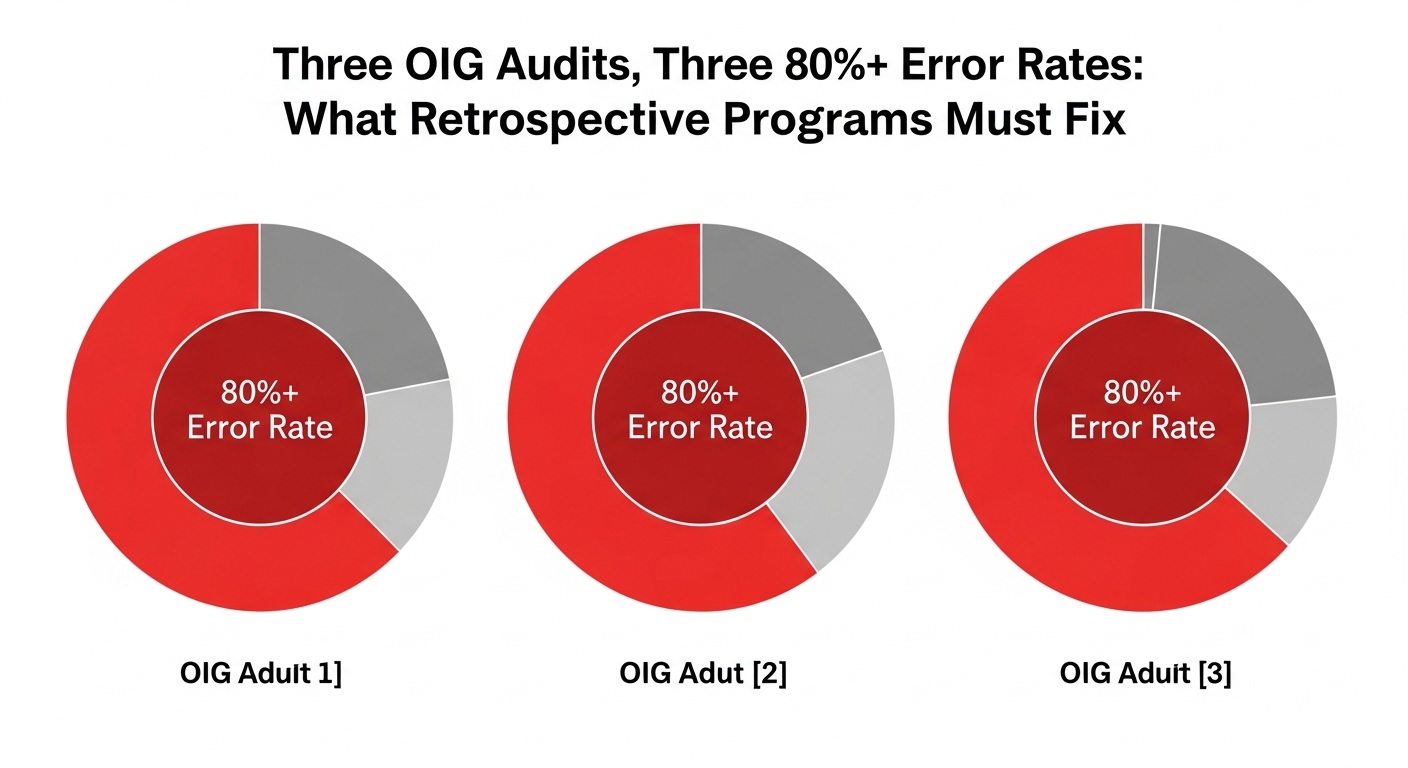

Three Audits, One Pattern

In March 2026, the HHS Office of Inspector General (OIG) published audit findings for three Medicare Advantage (MA) organizations. BCBS Alabama (A-07-22-01207) had a 91% error rate across 271 sampled enrollee-years with $7.06 million in estimated overpayments. Priority Health (A-07-22-01208) had an 84% error rate across 300 sampled enrollee-years with $4.4 million in estimated overpayments. Gateway Health Plan (A-03-22-00004) had an 81% error rate across 286 sampled enrollee-years with $4.3 million in estimated overpayments.

The combined picture: across 857 sampled enrollee-years, more than 80% had unsupported high-risk diagnosis codes. The estimated overpayments totaled over $15 million across just three organizations and two payment years. Acute stroke and breast cancer categories hit 100% error rates in multiple audits. The most frequent failure pattern across all three: history-of conditions coded as active diagnoses without evidence of ongoing clinical management.
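The combined figures can be checked directly against the numbers each audit reported. The Python sketch below does that arithmetic. The sample-weighted error rate assumes that each reported rate applies uniformly to that audit's whole sample of enrollee-years. That is an assumption about how the OIG computed its rates, not something the audits state.

```python
# Figures as reported in the three March 2026 OIG audits above.
audits = [
    # (organization, sampled enrollee-years, error rate, estimated overpayment in $)
    ("BCBS Alabama", 271, 0.91, 7.06e6),
    ("Priority Health", 300, 0.84, 4.4e6),
    ("Gateway Health Plan", 286, 0.81, 4.3e6),
]

total_sample = sum(n for _, n, _, _ in audits)
total_overpayment = sum(amount for _, _, _, amount in audits)
# Assumption: each reported rate applies uniformly to that audit's whole sample.
weighted_error = sum(n * rate for _, n, rate, _ in audits) / total_sample

print(total_sample)                                # 857
print(f"${total_overpayment / 1e6:.2f} million")   # $15.76 million
print(f"{weighted_error:.1%}")                     # 85.2%
```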

These aren’t anomalies. They’re the documented output of retrospective coding programs that didn’t validate documentation quality before submission.

The Common Thread: Documentation That Doesn’t Prove Active Management

In every audit, the failure mode is the same. A condition appears in the medical record. A coder assigns the corresponding hierarchical condition category (HCC). The code gets submitted. And when auditors review the documentation, there’s no evidence the condition was being actively managed during the relevant encounter. The diagnosis is mentioned, but the note doesn’t show monitoring, treatment decisions, assessment, or any other element that proves the condition is current and clinically relevant.

This happens because the coding process prioritizes identification over validation. The coder’s job, as defined by most program structures, is to find diagnoses in charts. The documentation-quality question is whether the note meets MEAT criteria (Monitor, Evaluate, Assess/Address, Treat) for that specific diagnosis. That question is checked inconsistently or not at all. Chart volumes and throughput expectations make thorough validation impractical without technology support.

The result is predictable: high submission rates, high error rates, and multimillion-dollar estimated overpayments. The coding programs did exactly what they were designed to do. They just weren’t designed for the standard CMS actually applies.

What the Fix Requires

Fixing this starts with embedding MEAT validation into the coding workflow as a mandatory step, not an optional quality check. AI that scans clinical notes and maps documentation to MEAT criteria gives coders the evidence assessment they need before making a submission decision. If the note shows monitoring activity, the system identifies it. If the note lacks any evidence of active management, the system flags the code as unsupported.
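Below is a minimal sketch of the MEAT mapping step. It assumes a plain-text note and a hand-written list of cue phrases for each MEAT element. The names `MEAT_CUES`, `assess_meat`, and `MeatResult` are hypothetical. Keyword matching stands in for the AI model described above. The sketch shows only the flow: find sentences that mention the condition, look for evidence of each MEAT element, and flag the code if none is found. It is not a production method.

```python
import re
from dataclasses import dataclass, field

# Hypothetical cue phrases per MEAT element (Monitor, Evaluate, Assess/Address,
# Treat). Keyword matching is only a stand-in for the clinical NLP or AI model
# a real system would use.
MEAT_CUES = {
    "Monitor": ["stable", "improving", "worsening", "follow-up", "symptoms"],
    "Evaluate": ["lab", "labs", "gfr", "a1c", "imaging", "exam", "results"],
    "Assess": ["assessment", "controlled", "uncontrolled", "progressing"],
    "Treat": ["prescribed", "continue", "increase", "refer", "dose", "plan"],
}


def _mentions(text: str, phrase: str) -> bool:
    """Case-insensitive whole-word match of a phrase in text."""
    return re.search(rf"\b{re.escape(phrase)}\b", text, re.IGNORECASE) is not None


@dataclass
class MeatResult:
    condition: str
    # MEAT element -> sentences that mention the condition and contain a cue.
    evidence: dict[str, list[str]] = field(default_factory=dict)

    @property
    def supported(self) -> bool:
        # Supported if at least one MEAT element has evidence; otherwise flag it.
        return any(self.evidence.values())


def assess_meat(note: str, condition: str, synonyms: list[str]) -> MeatResult:
    """Map sentences in a clinical note to MEAT elements for one condition."""
    sentences = re.split(r"(?<=[.!?])\s+", note.strip())
    terms = [condition, *synonyms]
    result = MeatResult(condition)
    for element, cues in MEAT_CUES.items():
        result.evidence[element] = [
            s for s in sentences
            if any(_mentions(s, t) for t in terms) and any(_mentions(s, c) for c in cues)
        ]
    return result


note = (
    "History of breast cancer. "
    "CKD stage 3: GFR 42 on today's labs, stable; continue lisinopril and recheck in 3 months."
)
print(assess_meat(note, "CKD", ["chronic kidney disease"]).supported)  # True
print(assess_meat(note, "breast cancer", []).supported)                # False
```

Here a single documented MEAT element counts as sufficient, which follows a common industry rule of thumb. A program could instead require stronger evidence before a code is submitted. The "History of breast cancer." sentence shows the failure pattern from the audits. The condition is mentioned, but nothing in the note supports it as active.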

Two-way review adds the second layer. Programs that only look for codes to add will keep producing the one-directional coding patterns these audits exposed. Programs that evaluate existing submissions for removal candidates catch the history-of conditions, the resolved diagnoses, and the carried-forward codes that lack current clinical support.
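At its core, two-way review is a comparison of two sets: the conditions that were submitted and the conditions the chart supports. The Python sketch below makes that comparison. It assumes the chart review has already produced a per-condition support decision, for example from a MEAT check. The names `ReviewFinding` and `two_way_review` and the category labels are illustrative, not real HCC identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewFinding:
    condition: str        # illustrative category label, not a real HCC code
    meat_supported: bool  # did the note show active management this period?


def two_way_review(submitted: set[str], findings: list[ReviewFinding]) -> dict[str, set[str]]:
    """Compare submitted conditions against what the chart review supports."""
    supported = {f.condition for f in findings if f.meat_supported}
    return {
        # Direction 1 (what add-only programs do): supported but not yet submitted.
        "add": supported - submitted,
        # Direction 2: submitted but unsupported, e.g. history-of, resolved,
        # or carried-forward conditions. Submitted conditions with no chart
        # finding at all also land here.
        "remove": submitted - supported,
        "confirmed": submitted & supported,
    }


submitted = {"Breast cancer", "Acute stroke", "Diabetes with complications"}
findings = [
    ReviewFinding("Breast cancer", meat_supported=False),  # "history of" only
    ReviewFinding("Acute stroke", meat_supported=False),   # carried forward from a past event
    ReviewFinding("Diabetes with complications", meat_supported=True),
    ReviewFinding("CKD stage 3", meat_supported=True),
]
result = two_way_review(submitted, findings)
print(sorted(result["add"]))     # ['CKD stage 3']
print(sorted(result["remove"]))  # ['Acute stroke', 'Breast cancer']
```

An add-only program would act on `result["add"]` alone. The `remove` set is the second direction, and it holds exactly the kinds of codes these audits found unsupported.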

Provider feedback closes the loop. When chart reviews identify documentation that doesn’t meet MEAT standards, that information should flow back to providers as specific, actionable guidance. Not “document better.” Rather, “this patient’s CKD was coded without a current GFR result or treatment plan.” Targeted feedback improves documentation quality at the source, reducing the volume of unsupported codes entering the system in the first place.

The Urgency Is in the Numbers

The three March 2026 OIG audits cover just three organizations, a small slice of an MA market in which CMS is now auditing 550+ contracts annually. The error rates they found, 81% to 91%, suggest the problem extends far beyond those three. Any plan that hasn’t stress-tested its submitted diagnoses against MEAT criteria is operating without visibility into its own exposure.

Plans restructuring their programs around Retrospective Risk Adjustment Coding that validates documentation quality, codes in both directions, and builds evidence trails for every submission are directly addressing the failures these audits documented. The error patterns are clear. The financial consequences are public. The fix is known. The only variable is whether plans implement it before or after their own audit findings arrive.